NSF CAREER: Scaling Deep Reinforcement Learning for Societal-Scale Cyber-Physical Systems

Funded by the National Science Foundation under Award CNS-2542543

This project will develop artificial intelligence techniques to improve the operation of large-scale infrastructure systems, such as smart transportation networks and electric power grids, which are essential to modern life. These complex systems, known as societal-scale cyber-physical systems, integrate physical infrastructure with thousands of sensors, computing devices, and actuators. Due to their scale and distributed nature, managing these systems in real time poses a significant challenge. To address this challenge, the project will leverage deep reinforcement learning, a form of artificial intelligence that learns optimal decision-making strategies directly from data. By improving the efficiency and reliability of critical infrastructure systems, the research will further the national interest by reducing traffic congestion, improving emergency response times, and increasing the stability of the power grid. This project will also develop a highly skilled science, technology, engineering, and mathematics (STEM) talent pipeline. These activities will include creating interactive, game-based learning modules for K-12 students, developing an interdisciplinary graduate course on AI and cyber-physical systems, and providing training materials to help community partners effectively adopt artificial intelligence technologies.

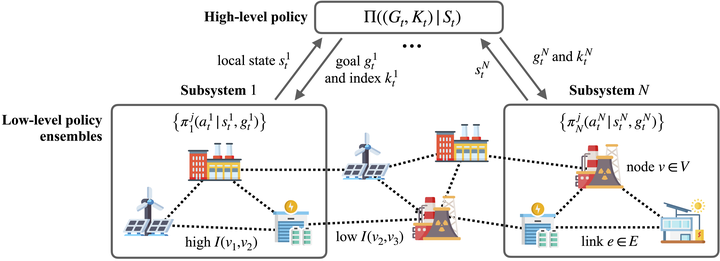

This project will advance the foundations of sequential decision-making for societal-scale cyber-physical systems (CPS) by addressing the fundamental scalability and communication challenges faced by existing deep reinforcement learning (DRL) approaches. The scope of the research will encompass theoretical and algorithmic innovations to develop a hierarchical and communication-aware DRL framework, which will be tailored to the vast state-action spaces and distributed nature of societal-scale CPS. First, the project will introduce a cyber-physical feudal reinforcement learning framework that will provide automated, data-driven system decomposition. This approach will partition a complex cyber-physical system into tractable subsystems based on their underlying dynamic cyber-physical couplings. The framework will then train a hierarchy of DRL policies to control the system: a high-level coordination policy will generate abstract but physically achievable goals for ensembles of decentralized, low-level subsystem policies. Second, to address the communication constraints of distributed CPS, the project will extend the feudal DRL framework to be communication-aware. To minimize bandwidth overhead, the framework will integrate physics-informed causal abstraction to compress state representations and trigger communication only when necessary. To handle communication disruptions and missing data, the framework will employ adversarial curriculum learning to train robust policies that will rely on belief states and learned intrinsic motivation to ensure graceful degradation. The project will evaluate the proposed innovations using high-fidelity simulations, real-world datasets from transportation and power systems, and community partnerships, rigorously demonstrating the intellectual contributions required to deploy scalable, data-driven control policies across critical distributed infrastructure.
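To make the idea of triggering communication "only when necessary" concrete, the following is a minimal sketch of a generic send-on-delta rule. It is not the project's method: the abstract does not specify a trigger rule, so the class `EventTriggeredSender`, the function `simulate`, the Euclidean-distance test, and the `threshold` value are all illustrative assumptions. A low-level subsystem reports its (already compressed) state to the coordinator only when it has drifted far enough from the last value it sent, and the coordinator holds the last received value in between.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple


@dataclass
class EventTriggeredSender:
    """Hypothetical subsystem agent that transmits only on large state changes."""

    threshold: float  # assumed trigger threshold, chosen for illustration
    last_sent: Optional[List[float]] = None
    messages_sent: int = 0

    def maybe_send(self, state: Sequence[float]) -> Optional[List[float]]:
        """Return a copy of the state if it should be transmitted, else None."""
        first_message = self.last_sent is None
        if first_message or math.dist(state, self.last_sent) > self.threshold:
            self.last_sent = list(state)
            self.messages_sent += 1
            return list(self.last_sent)
        return None


def simulate(
    states: Sequence[Sequence[float]], threshold: float
) -> Tuple[List[List[float]], int]:
    """Run the sender over a trajectory; return the coordinator's view and message count."""
    sender = EventTriggeredSender(threshold=threshold)
    coordinator_view: List[List[float]] = []
    held: List[float] = []
    for state in states:
        message = sender.maybe_send(state)
        if message is not None:
            held = message  # coordinator updates only when a message arrives
        coordinator_view.append(list(held))
    return coordinator_view, sender.messages_sent


if __name__ == "__main__":
    trajectory = [[0.0], [0.1], [0.2], [1.0], [1.05], [2.5]]
    view, count = simulate(trajectory, threshold=0.5)
    print(f"sent {count} of {len(trajectory)} states")
    print(view)
```

In this toy trajectory only the states that move more than the threshold away from the last transmitted value are sent, so the coordinator acts on a slightly stale but bandwidth-cheap view. A learned or physics-informed trigger, as the abstract describes, would replace the fixed distance test.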